In a book that Routledge will be publishing later this year, I have written a chapter outlining some key developments in academic research on homework. The chapter covers both quantitative and qualitative studies, specifically those endeavouring to measure the effect of homework on attainment. There is quite a bit of analysis of both Harris Cooper’s and John Hattie’s mammoth meta-analyses of homework as well as plenty of references to other types of study (see, for example, Cooper, 2007; Hattie, 2009). The chapter also discusses research on the ideal amount of time pupils should spend on homework and differences between the effect of homework on primary and secondary pupils. This blog, however, briefly expands on some of the meta-analyses I looked at whilst researching but do not analyse in depth in the book (as there are plenty of other studies). Importantly, as pupils have now returned to school after another period of remote learning, homework will once again be set regularly by teachers. This blog simply argues that this old habit is worth continuing.

What are meta-analyses?

A meta-analysis is a quantitative review of research literature. In education, a meta-analysis can look at anything from the impact of questioning on pupil progress to the effect of pastoral interventions on pupil wellbeing. A typical analysis will review published data sets or peer-reviewed studies, often categorising data to make comparisons and calculate effect sizes. An effect size tells us whether, and how strongly, an intervention affects the area of study. In the case of homework, this could include the effect of homework on attainment (for instance, do pupils have better attainment scores if homework is regularly completed than if it is not?). I will explain how effect sizes are reached below as the studies discussed depend on them. However, if you know all this, please skip this bit – it’s nothing remarkable.

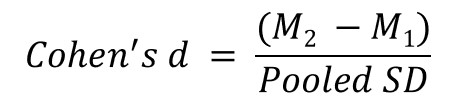

A common way of calculating a comparative effect size is Cohen’s d. Here, the effect size is simply the standardised mean difference between two groups being studied; this could be the difference between test scores or something else measurable. Of course, the two groups will be differentiated by the subject being studied, such as whether homework is completed, weekly retrieval practice tasks are used, questioning is targeted etc. These comparisons work best when one group receives an intervention or strategy and another group does not.

In the equation below, M1 is the mean score of the ‘experimental group’, in which the intervention or strategy being studied has been used, whereas M2 is the mean score of the ‘control group’, who have not experienced the intervention or strategy. To calculate Cohen’s d, a researcher would subtract the mean score of the control group from the mean score of the experimental group and divide by the ‘pooled’ standard deviation of both groups. The standard deviation is simply a measure of how widely a set of values is spread around its mean.

$$d = \frac{M_1 - M_2}{SD_{\text{pooled}}}$$

Most of these calculations result in answers ranging from 0 to 1, but they can – of course – go higher (or fall below zero if the control group outperforms the experimental group). Cohen (1992) suggests that d = 0.2 should be seen as a ‘small’ effect size, 0.5 considered a ‘medium’ effect size and 0.8 a ‘large’ effect size. For example, an effect size of 0.8 means that the score of the average person in the experimental group is 0.8 standard deviations above that of the average person in the control group; if scores are normally distributed, that person scores higher than around 79% of the control group. In this blog, effect sizes are judged on a slightly finer scale:

- d = 0 – 0.20 (weak effect)

- d = 0.21 – 0.50 (modest effect)

- d = 0.51 – 1.00 (moderate effect)

- d = 1.00+ (strong effect)
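To make the calculation described above concrete, here is a minimal Python sketch. It rests on some assumptions: the test scores are invented purely for illustration, the function names `cohens_d` and `band` are hypothetical, and the pooled standard deviation uses one common definition (each group's sample variance weighted by its degrees of freedom), which may differ from the definition used in any particular study.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def cohens_d(experimental, control):
    """Standardised mean difference between two groups of scores."""
    n1, n2 = len(experimental), len(control)
    # Assumed pooled SD: sample variances weighted by degrees of freedom.
    pooled_sd = sqrt(
        ((n1 - 1) * variance(experimental) + (n2 - 1) * variance(control))
        / (n1 + n2 - 2)
    )
    return (mean(experimental) - mean(control)) / pooled_sd


def band(d):
    """Label the magnitude of an effect size using the bands listed above."""
    size = abs(d)
    if size <= 0.20:
        return "weak"
    if size <= 0.50:
        return "modest"
    if size <= 1.00:
        return "moderate"
    return "strong"


# Hypothetical test scores, invented for illustration only.
homework = [62, 70, 68, 75, 66, 71]
no_homework = [60, 65, 63, 70, 61, 66]

d = cohens_d(homework, no_homework)
print(f"d = {d:.2f} ({band(d)} effect)")

# Share of a normally distributed control group scoring below the
# average pupil in the experimental group when d = 0.8.
print(f"{NormalDist().cdf(0.8):.0%}")  # prints 79%
```

The last line shows where the 79% figure for d = 0.8 comes from: it is the proportion of a normal distribution lying below 0.8 standard deviations above the mean.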

In a similar vein to comparative effect sizes, some researchers use correlation coefficients to calculate effect sizes. These look at the correlation of an intervention or strategy with a measurable increase or decrease in data, such as test scores, attendance or even wellbeing if quantified somehow. Researchers often use the ‘Pearson correlation coefficient’, which is basically a measure of linear correlation between two sets of data. The Pearson correlation is typically calculated using the following formula:

$$r = \frac{N\sum xy - \sum x \sum y}{\sqrt{\left[N\sum x^2 - \left(\sum x\right)^2\right]\left[N\sum y^2 - \left(\sum y\right)^2\right]}}$$

Here, r is the correlation coefficient, N is the number of pairs of scores, ∑xy is the sum of the products of paired scores, ∑x is the sum of x scores, ∑y is the sum of y scores, ∑x² is the sum of squared x scores and, lastly, ∑y² is the sum of squared y scores. The resulting value always lies between -1 and +1, but in order to make sense of this value Cohen, Manion and Morrison (2007) suggest that effect sizes can be judged as:

- r = 0 – 0.1 (weak effect)

- r = 0.2 – 0.3 (modest effect)

- r = 0.4 – 0.5 (moderate effect)

- r = 0.6 – 0.8 (strong effect)

- r = 0.8+ (very strong effect)
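As a rough illustration, the Pearson calculation can be sketched in a few lines of Python using the sums defined above. The function name `pearson_r` and the data (weekly homework minutes and test scores for six pupils) are hypothetical and invented for illustration; they are not taken from any of the studies discussed.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient from the raw sums of paired scores."""
    n = len(x)                                  # N: number of pairs
    sum_x, sum_y = sum(x), sum(y)               # sums of x and y scores
    sum_xy = sum(a * b for a, b in zip(x, y))   # sum of products of pairs
    sum_x2 = sum(a * a for a in x)              # sum of squared x scores
    sum_y2 = sum(b * b for b in y)              # sum of squared y scores
    numerator = n * sum_xy - sum_x * sum_y
    denominator = sqrt(
        (n * sum_x2 - sum_x ** 2) * (n * sum_y2 - sum_y ** 2)
    )
    return numerator / denominator


# Hypothetical data, invented for illustration only.
minutes = [30, 45, 60, 20, 90, 50]
scores = [55, 60, 58, 50, 72, 61]

r = pearson_r(minutes, scores)
print(f"r = {r:.2f}")
```

A value near +1 means the two sets of scores rise together almost in a straight line, a value near -1 means one falls as the other rises, and a value near 0 means there is little linear relationship.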

A fair number of meta-analyses have been conducted on homework, spanning a broad range of methodologies and levels of specificity, as well as using effect sizes based on standard deviation or correlation coefficients. Some of the most significant studies are compared in my book (particularly those by Harris Cooper and colleagues as well as John Hattie), but I will further explain some of the less regularly cited studies below.

Studies from the 1980s and 1990s

Although dated, one of the first meta-analyses carried out on homework and attainment was by Graue, Weinstein and Walberg (1983). This analysis reviewed 29 controlled studies published between 1970 and 1980 and showed that homework can have a positive effect on attainment. The authors found that of the 121 comparisons made between groups given homework and those not given homework, 109 showed that homework had an impact on pupil attainment, giving an overall effect size of d = 0.50, which indicates a ‘modest effect’. However, one caveat here is that the research also examined school-based programmes aimed at increasing educationally-stimulating home environments for pupils at elementary (primary) school; this means that the study had a wider focus on promoting the right environment for completing homework away from school.

A year later, Paschal, Weinstein and Walberg (1984) published a more general study titled Homework’s Powerful Effect on Learning. This study focused on US and Canadian studies reported between 1964 and 1981 and looked at pupils who had been set homework and those who had not at both elementary (primary) school and high school (Key Stage 4 and 5 if in the UK; hereafter KS4 and KS5). The overall effect size stood at d = 0.36, which suggests setting homework has a modest effect on attainment. Within the 15 studies, 81 comparisons were made between groups receiving and not receiving homework and the authors suggest 85% of these comparisons showed a favourable advantage for pupils receiving homework assignments. Despite admitting that standards of homework would have varied, Paschal, Weinstein, and Walberg state, ‘Thus, both the magnitude and consistency of homework effect is substantial’ (ibid., p. 76).

Moreover, it is worth mentioning that Walberg, who was involved in both of the previously discussed analyses, completed another often-cited meta-analysis in 1999 that found 47 studies indicating that homework has a positive effect on attainment (Walberg, 1999; cited in Cooper, 2007). Importantly, Walberg writes that the impact of homework is ‘almost tripled when teachers take time to grade the work, make corrections and specific comments on improvements that can be made’ (Walberg, 1999, p. 9). In a nod to the current trend towards whole class feedback (for example, see Christodoulou, 2019), Walberg highlights the importance of discussing problems and solutions not only with individual pupils, but also with the whole class. In this analysis, he concludes, ‘Homework also seems particularly effective in secondary school’ (Walberg, 1999, p. 9). This last point becomes a theme of the meta-analyses on homework.

Studies completed in the last 5 years

Other meta-analyses, such as one by Fan, Xu, Cai, He and Fan (2017), have found that time spent on homework has a positive correlation with attainment. Their analysis, which synthesised research conducted over a 30-year period from 1986 to 2015, examined the relationship between homework and attainment in maths and science. Their results revealed a small but positive relationship between homework and academic attainment in the subjects covered. They also looked at the frequency of homework set and effort spent on completing it as well as various other factors that could have affected pupils’ approach to homework. Their main effect sizes of interest included:

- frequency of homework: r = 0.12 (weak effect)

- time spent on homework: r = 0.15 (weak effect)

- effort given to homework: r = 0.31 (modest effect)

- completion of homework: r = 0.59 (moderate effect)

- elementary (primary) school: r = 0.36 (modest effect)

- middle school (KS3): r = 0.14 (weak effect)

- high school (KS4/5): r = 0.3 (modest effect).

One finding to note was that the time spent on homework and its frequency had smaller effect sizes than completion and effort. Interestingly, and unlike many other studies, they found that the relationship between homework and attainment in maths and science was stronger for elementary (primary) pupils than for middle school (KS3) pupils, although high school (KS4/5) pupils still showed a positive relationship. One suggestion put forward by the authors for the modest effect for elementary pupils is that maths assignments were often short whilst those in other subjects were longer; this may account for differences with other analyses as their study focused on maths and science only. Lastly, the analysis also showed the effect to be more positive amongst US pupils than Asian pupils.

In the same year, Baş, Şentürk and Ciğerci (2017) completed a meta-analysis that sought to consider various aspects of homework, including different subjects, grade levels, the duration of implementation, the level of instruction, socioeconomics and assignment. Importantly, in terms of grade level/year group, Baş, Şentürk and Ciğerci’s analysis mirrors Cooper’s (1989a; 1989b) first synthesis of studies, which found that high school pupils benefited more from homework. Baş, Şentürk and Ciğerci likewise found that pupils in grade 9 and over benefited more from homework than younger pupils. For instance:

- grades 1-4 (years 2-5): d = 0.206 (weak effect)

- grades 5-8 (years 6-9): d = 0.412 (modest effect)

- grades 9+ (years 10 and above): d = 0.479 (modest effect).

Unlike those of Fan, Xu, Cai, He and Fan (2017), these effect sizes are higher for older year groups, with the largest jump coming between the youngest and the middle grades. Whilst this reinforces Cooper’s first major synthesis on homework as well as other studies, we should not forget that Cooper’s third major synthesis of homework studies showed slightly different results (again, this study will be covered further in my book, but the actual studies can be found easily on the Internet). If we are, however, to see trends in the research pointing to the effect of homework for older pupils and students, Baş, Şentürk and Ciğerci’s meta-analysis is useful as it also looks at differences in the effect homework has on attainment between elementary (primary) school, high school (KS3/4) and higher education. For instance:

- elementary (primary) schools: d = 0.15 (weak effect)

- high schools (mostly KS3/4): d = 0.48 (modest effect)

- higher education: d = 0.45 (modest effect).

It is interesting that effect sizes do not increase for higher education, despite the assumption that older pupils are more inclined or willing to partake in homework. Nonetheless, the number of studies included here was quite low, with only 2 for higher education settings.

Baş, Şentürk and Ciğerci’s findings suggest that 64% of the research studies included in their analysis showed a positive effect of homework on academic attainment. However, the authors also found that whilst there was a general positive relationship here, some of the studies they examined found that homework ‘may have partial effects on academic success in certain courses, exams or classroom grades’ (ibid.), which means homework is no silver bullet to academic success per se. In terms of subjects, they found that combined science and chemistry were positively correlated with homework (at r = 0.567 and r = 0.8 respectively), but that maths was not.

Brief conclusion

Despite some mild discrepancies, all of the meta-analyses mentioned above suggest that homework impacts on attainment to some extent. Although the effect sizes for most are small, I do not think they should be discounted. Granted, we know that there are various competing variables affecting any straightforward conclusions here, but if these small effects are being detected, we must assume that homework is still a worthy pursuit.

For more see – Homework with Impact: Why what you set and how you set it matters (to be published in the Autumn).

References

Baş, G., Şentürk, C., & Ciğerci, F. M. (2017). Homework and academic achievement: A meta-analytic review of research. Issues in Educational Research, 27(1), 31-50.

Cohen, J. (1992). Statistical power analysis. Current Directions in Psychological Science, 1(3), 98–101.

Cohen, L., Manion, L., & Morrison, K. (2007). Research methods in education (6th ed.). Abingdon: Routledge.

Cooper, H. (1989a). Homework. White Plains, NY: Longman.

Cooper, H. (1989b). Synthesis of research on homework. Educational Leadership, 47(3), 85-91.

Cooper, H. (2007). The battle over homework: common ground for administrators, teachers, and parents. Thousand Oaks, CA: Corwin Press.

Christodoulou, D. (2019, 19 March). Whole-class feedback: saviour or fad? No More Marking blog. Retrieved from: https://blog.nomoremarking.com/whole-class-feedback-saviour-or-fad-5c54c463a4d0

Fan, H., Xu, J., Cai, Z., He, J., & Fan, X. (2017). Homework and students’ achievement in math and science: A 30-year meta-analysis, 1986–2015. Educational Research Review, 20, 35-54.

Graue, M. E., Weinstein, T., & Walberg, H. J. (1983). School-based home instruction and learning: A quantitative synthesis. The Journal of Educational Research, 76(6), 351–360.

Hattie, J. (2009). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. London: Routledge.

Paschal, R. A., Weinstein, T., & Walberg, H. J. (1984). The effects of homework on learning: A quantitative synthesis. The Journal of Educational Research, 78(2), 97–104.

Walberg, H.J. (1999). Productive teaching. In H.C. Waxman & H.J. Walberg (Eds.), New directions for teaching practice and research. Berkeley, CA: McCutchen Publishing Corporation.

Photo credit: Pixa Hive (used under a Creative Commons Licence)